Anthropic and Colossus 1: more compute for Claude

Anthropic signed a compute deal with SpaceX to use Colossus 1: 300+ MW and 220,000+ NVIDIA GPUs. Higher Claude Code limits, no peak-hour throttling on Pro/Max, and bigger Opus API rate limits. What it means for businesses building with AI.

Quick take

Anthropic has signed a deal with SpaceX to use the compute capacity of Colossus 1, the large data center associated with the xAI ecosystem. The collaboration between rival AI companies is notable, but the more interesting part is what the move reveals: the AI bottleneck is no longer only about having better models. It is also about having enough infrastructure to serve them without friction.

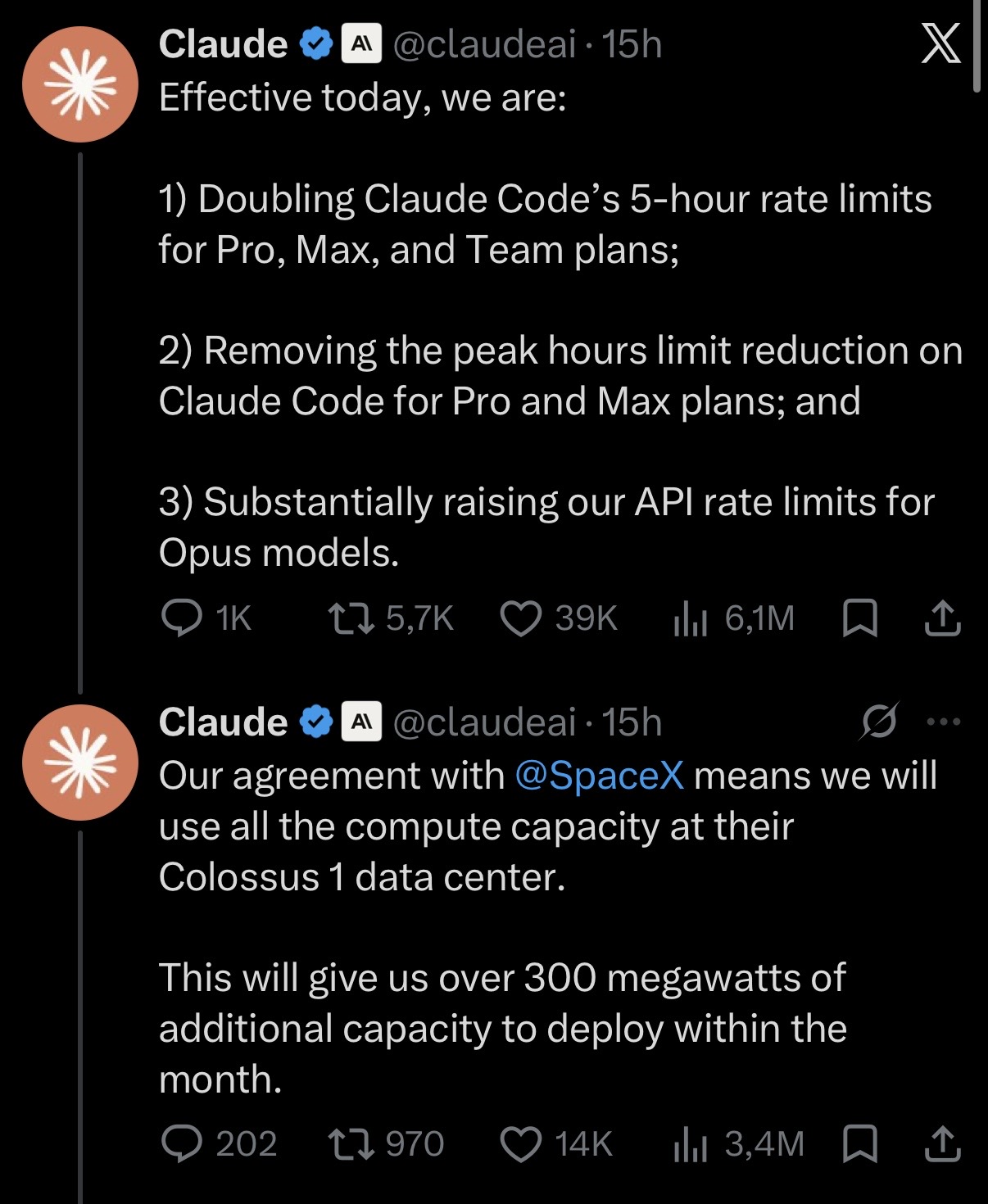

In practice, Anthropic is already turning this into three concrete changes for users: higher limits for Claude Code, removal of peak-hour throttling on Pro and Max plans, and significantly higher rate limits for the Claude Opus API.

What Anthropic announced

Anthropic has announced a deal with SpaceX to use the entire compute capacity of the Colossus 1 data center. According to the official post, this gives Anthropic access to more than 300 MW of new capacity and over 220,000 NVIDIA GPUs, expected to come online within the month.

The shallow read is that Anthropic is leaning on infrastructure tied to Elon Musk's ecosystem. But that's not the most interesting part.

The point is that Anthropic needs more compute because Claude no longer competes only as a model: it competes as a product used by developers, technical teams, enterprises and heavy users who expect constant availability.

What changes immediately for Claude users

Anthropic announced three changes, effective the same day as the announcement:

- The five-hour Claude Code limits are doubled for Pro, Max, Team and Enterprise per-seat plans.

- Peak-hour throttling for Claude Code is removed on Pro and Max accounts.

- API rate limits for Claude Opus models are substantially increased.

This doesn't mean Claude automatically gets a new model, nor that the interface changes, nor that there's any direct integration with Grok. The change is more operational: more capacity available to sustain real usage.

For Pro and Max users, the promise is straightforward: less friction, fewer cutoffs from limits, and more headroom to use Claude as a daily working tool.

Why this matters for companies and SMBs

For a business, the value of an AI tool doesn't just depend on how smart it looks in a demo. It depends on whether it can be used reliably inside real processes.

When a team uses Claude Code to review repositories, generate tests, document modules, analyze errors or accelerate development tasks, usage limits become a productivity constraint. If the tool cuts out mid-flow, it stops being an operational advantage and becomes an awkward dependency.

That's why this news matters: Anthropic is reinforcing the layer the user normally doesn't see, but which determines the final experience.

More compute means more inference capacity, more headroom for heavy users, and less pressure on product limits.

The new AI race: infrastructure, energy and availability

Over the last few years, the public debate has focused on which model is smartest: GPT, Claude, Gemini, Grok or Llama. But the next phase of the competition looks less flashy and more strategic.

The question is no longer just who has the best model.

The question is who can run it at scale, with enough energy, hardware, data centers and infrastructure deals.

That's where Colossus 1 comes in. Its value lies less in the brand than in the trend it represents: the major AI labs need massive amounts of compute to train, serve, fine-tune and scale their products.

What hasn't been announced

It's worth separating facts from interpretation.

Anthropic has not announced a price cut.

Nor has it announced a new Claude model directly tied to this deal.

And there's no product-level integration between Claude and Grok for the end user.

What's confirmed is more concrete and, for heavy users, probably more important: more compute capacity to reduce restrictions and improve availability.

E2D take

At E2D the read is clear: enterprise AI isn't won with prompts or with headlines about new models. It's won when the technology can be integrated into real processes without breaking the workflow.

For an SMB, this lands as a simple idea:

If an AI tool is going to be part of sales, support, development, operations or internal analysis, it needs to be reliable, available and scalable.

The Anthropic and Colossus announcement is relevant because it points exactly at that layer: the infrastructure that lets AI stop being an occasional tool and become a stable part of daily work.

What companies should be watching now

First, if they use Claude Code or the Anthropic API, they should review their current limits and consider whether the new headroom enables more ambitious use cases.
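One way to review current limits on the API side is to look at the rate-limit headers returned with each response. The sketch below is a minimal illustration, assuming headers that follow the `anthropic-ratelimit-<kind>-limit` and `anthropic-ratelimit-<kind>-remaining` naming pattern; the exact names should be checked against Anthropic's current API documentation. The `headroom` helper and the sample values are hypothetical.

```python
def headroom(headers, kind):
    """Return the unused fraction of the rate limit for `kind`
    ("requests" or "tokens"), or None if the headers are missing.

    Assumption: `headers` is a plain dict with lower-cased keys named
    anthropic-ratelimit-<kind>-limit and anthropic-ratelimit-<kind>-remaining.
    """
    limit = headers.get(f"anthropic-ratelimit-{kind}-limit")
    remaining = headers.get(f"anthropic-ratelimit-{kind}-remaining")
    if limit is None or remaining is None:
        return None
    limit = int(limit)
    if limit <= 0:
        return None
    return int(remaining) / limit


# Hypothetical header values, for illustration only.
sample = {
    "anthropic-ratelimit-requests-limit": "1000",
    "anthropic-ratelimit-requests-remaining": "250",
}
print(headroom(sample, "requests"))  # 0.25
print(headroom(sample, "tokens"))    # None
```

Logging this value over a normal working week gives a rough picture of how close a team runs to its limits, and whether the new headroom changes what is feasible.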

Second, if they're comparing AI providers, they shouldn't evaluate response quality alone. They should also look at availability, limits, pricing, enterprise support, inference regions and integration capability.
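A simple weighted scorecard is one way to make that comparison explicit. The sketch below uses the criteria named above; the weights, the 0 to 10 scores and both providers are hypothetical and would need to reflect each company's own priorities.

```python
# Criteria come from the paragraph above; the weights are hypothetical
# and sum to 100 so that scores stay on the same 0-10 scale.
WEIGHTS = {
    "response_quality": 25,
    "availability": 20,
    "limits": 15,
    "pricing": 15,
    "enterprise_support": 10,
    "inference_regions": 5,
    "integration": 10,
}


def weighted_score(scores, weights=WEIGHTS):
    """Combine per-criterion scores (0-10) into one weighted average."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Two made-up providers: A answers slightly better, B is more available.
provider_a = {"response_quality": 9, "availability": 6, "limits": 5,
              "pricing": 7, "enterprise_support": 7,
              "inference_regions": 6, "integration": 8}
provider_b = {"response_quality": 8, "availability": 9, "limits": 8,
              "pricing": 6, "enterprise_support": 7,
              "inference_regions": 7, "integration": 7}

print(weighted_score(provider_a))  # 7.05
print(weighted_score(provider_b))  # 7.65
```

In this made-up case the provider with the weaker responses still comes out ahead once availability and limits are counted, which is the point of not judging on response quality alone.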

Third, if they're designing AI automations, they should assume the provider's infrastructure is a critical variable. An automated flow doesn't just need good responses; it needs consistency.
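In code, treating the provider as a variable usually means wrapping each call in a retry policy. The sketch below shows exponential backoff with jitter, a common pattern for rate limits and temporary overload. It is not tied to any particular SDK: `TransientError`, `call_with_backoff` and `flaky` are hypothetical names, and a real integration would map the provider's rate-limit and overload errors onto the retryable case.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for retryable failures (rate limit, overload)."""


def call_with_backoff(call, max_attempts=5, base_delay=1.0, max_delay=30.0,
                      sleep=time.sleep):
    """Run `call()`; on TransientError, wait and retry with exponential
    backoff plus jitter. Re-raises after `max_attempts` failures."""
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except TransientError:
            if attempt == max_attempts:
                raise
            # The delay ceiling doubles on each attempt, capped at max_delay.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter: wait a random time in [0, delay] so that many
            # clients do not retry in lockstep.
            sleep(random.uniform(0, delay))


# Demo with a simulated call that fails twice, then succeeds.
attempts = {"n": 0}


def flaky():
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TransientError("simulated rate limit")
    return "ok"


# The no-op sleep skips real waiting in the demo.
result = call_with_backoff(flaky, sleep=lambda seconds: None)
print(result, attempts["n"])  # ok 3
```

Higher provider limits make the retry path rarer, but an automated flow should still have one.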

Conclusion

The Anthropic–SpaceX deal around Colossus 1 isn't just an infrastructure story. It's a signal of where AI is heading.

Models will keep mattering, but competitive advantage is starting to depend just as much on the ability to serve them at scale.

More compute isn't a technical detail. It's what allows tools like Claude to go from being powerful in isolated tests to being useful in real operations.

And for businesses, that's the difference between trying AI and building with AI.

Frequently asked questions

Is Claude getting a new model because of this deal?

No new Claude model directly tied to this deal has been announced. The announcement focuses on compute capacity and usage limits.

Which users will notice the change first?

Mainly heavy Claude Code users, Pro and Max subscribers, Team and Enterprise per-seat customers, and organizations using the Claude Opus API.

Does this mean Claude and Grok are integrating?

No. No integration between Claude and Grok has been announced. The deal is about infrastructure and compute capacity.

Why is compute so important in AI?

Because AI models need significant compute for training, fine-tuning and responding to millions of users. Without enough infrastructure, you get limits, saturation and usage restrictions.

Sources

- Anthropic: Higher limits announcement

- xAI: Anthropic compute partnership
